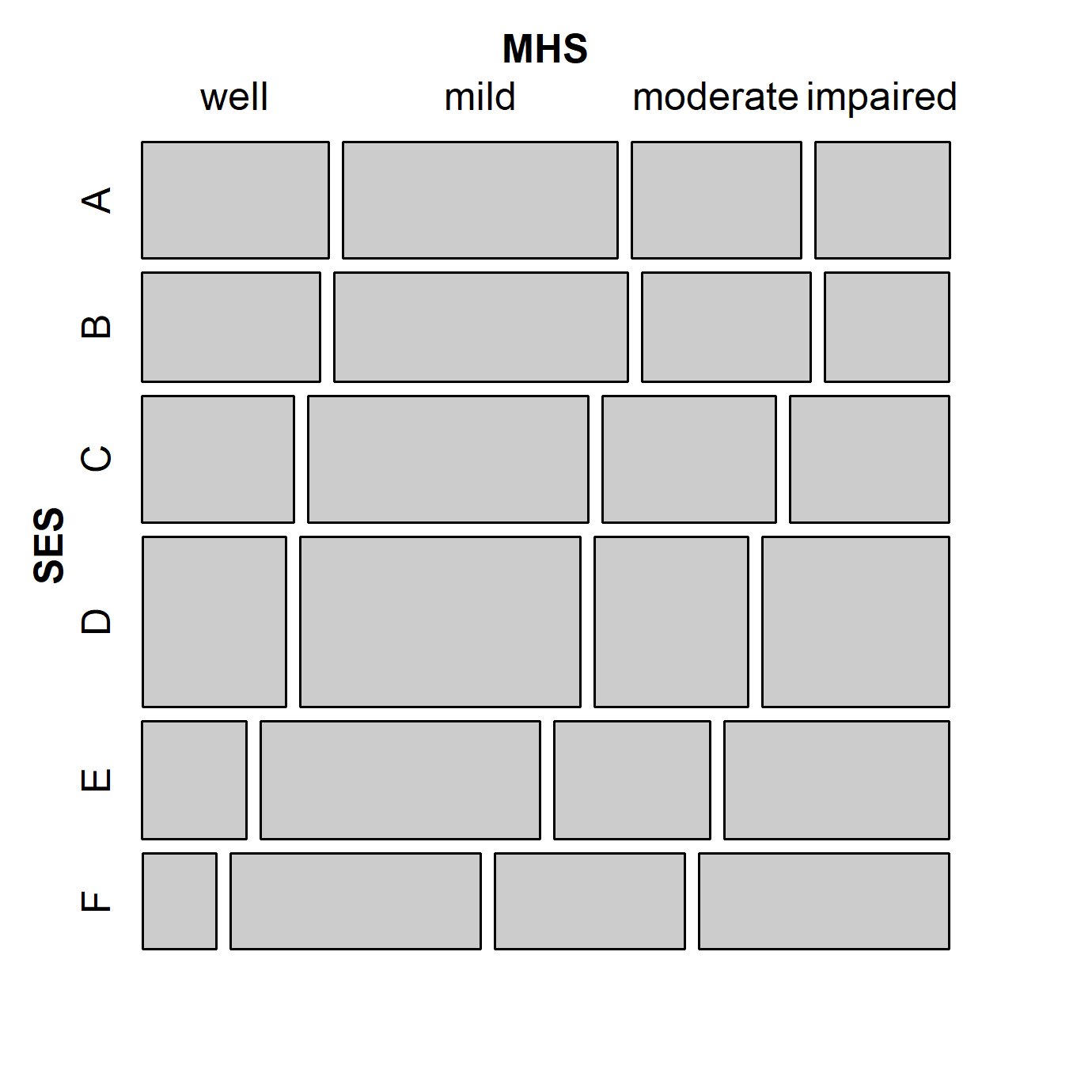

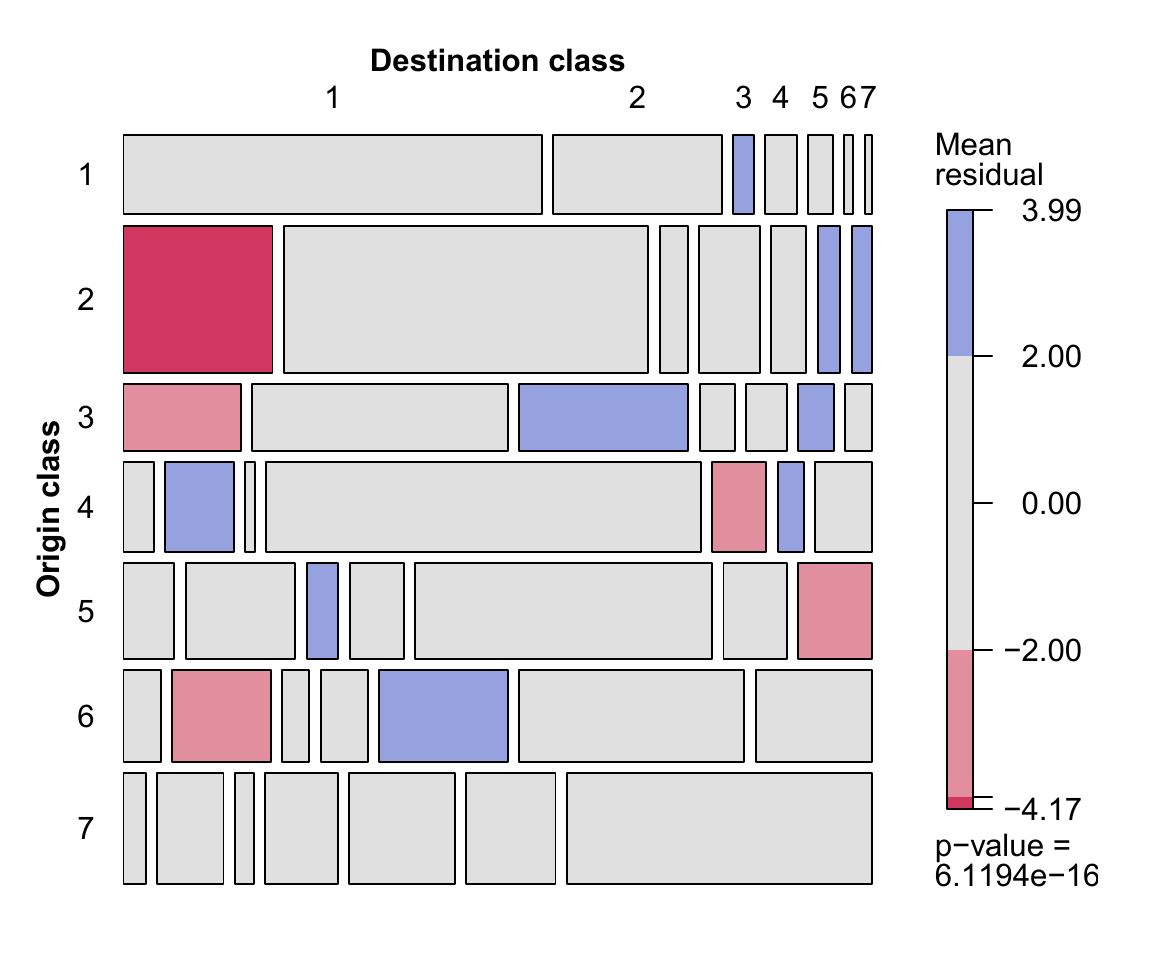

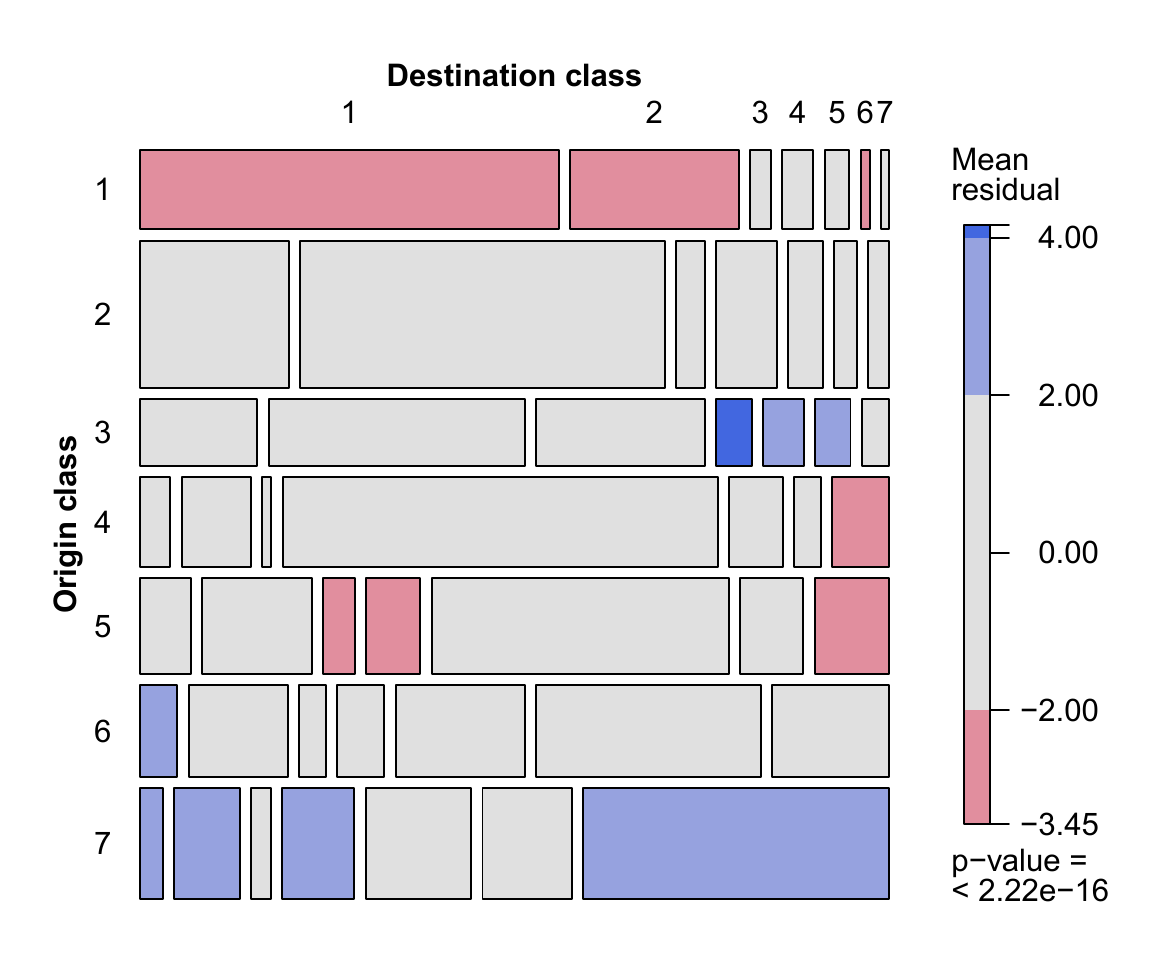

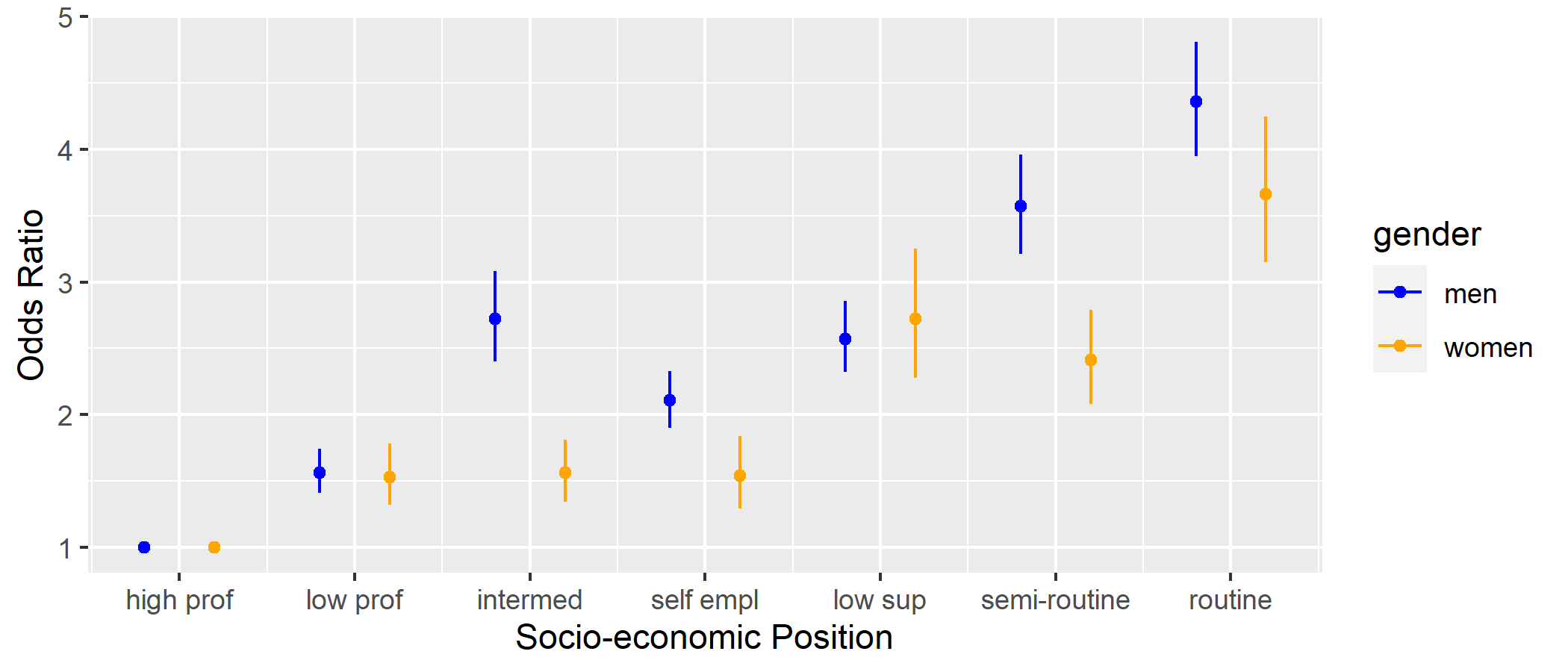

class: center, middle, inverse, title-slide # Structured Effects with Generalized Nonlinear Models ### <span style="font-size:40px;font-weight:600">Heather Turner<br> Research Software Engineering Fellow<br>University of Warwick</span> ### <svg aria-hidden="true" role="img" viewBox="0 0 512 512" style="height:1em;width:1em;vertical-align:-0.125em;margin-left:auto;margin-right:auto;font-size:inherit;fill:currentColor;overflow:visible;position:relative;"><path d="M459.37 151.716c.325 4.548.325 9.097.325 13.645 0 138.72-105.583 298.558-298.558 298.558-59.452 0-114.68-17.219-161.137-47.106 8.447.974 16.568 1.299 25.34 1.299 49.055 0 94.213-16.568 130.274-44.832-46.132-.975-84.792-31.188-98.112-72.772 6.498.974 12.995 1.624 19.818 1.624 9.421 0 18.843-1.3 27.614-3.573-48.081-9.747-84.143-51.98-84.143-102.985v-1.299c13.969 7.797 30.214 12.67 47.431 13.319-28.264-18.843-46.781-51.005-46.781-87.391 0-19.492 5.197-37.36 14.294-52.954 51.655 63.675 129.3 105.258 216.365 109.807-1.624-7.797-2.599-15.918-2.599-24.04 0-57.828 46.782-104.934 104.934-104.934 30.213 0 57.502 12.67 76.67 33.137 23.715-4.548 46.456-13.32 66.599-25.34-7.798 24.366-24.366 44.833-46.132 57.827 21.117-2.273 41.584-8.122 60.426-16.243-14.292 20.791-32.161 39.308-52.628 54.253z"/></svg> <a href="https://twitter.com/heathrturnr">@HeathrTurnr</a><br><br>24 November 2021<br><br><span style=“font-size:50px;font-weight:600”;><svg aria-hidden="true" role="img" viewBox="0 0 512 512" style="height:1em;width:1em;vertical-align:-0.125em;margin-left:auto;margin-right:auto;font-size:inherit;fill:currentColor;overflow:visible;position:relative;"><path d="M326.612 185.391c59.747 59.809 58.927 155.698.36 214.59-.11.12-.24.25-.36.37l-67.2 67.2c-59.27 59.27-155.699 59.262-214.96 0-59.27-59.26-59.27-155.7 0-214.96l37.106-37.106c9.84-9.84 26.786-3.3 27.294 10.606.648 17.722 3.826 35.527 9.69 52.721 1.986 5.822.567 12.262-3.783 16.612l-13.087 13.087c-28.026 28.026-28.905 73.66-1.155 101.96 28.024 28.579 74.086 28.749 102.325.51l67.2-67.19c28.191-28.191 28.073-73.757 0-101.83-3.701-3.694-7.429-6.564-10.341-8.569a16.037 16.037 0 0 1-6.947-12.606c-.396-10.567 3.348-21.456 11.698-29.806l21.054-21.055c5.521-5.521 14.182-6.199 20.584-1.731a152.482 152.482 0 0 1 20.522 17.197zM467.547 44.449c-59.261-59.262-155.69-59.27-214.96 0l-67.2 67.2c-.12.12-.25.25-.36.37-58.566 58.892-59.387 154.781.36 214.59a152.454 152.454 0 0 0 20.521 17.196c6.402 4.468 15.064 3.789 20.584-1.731l21.054-21.055c8.35-8.35 12.094-19.239 11.698-29.806a16.037 16.037 0 0 0-6.947-12.606c-2.912-2.005-6.64-4.875-10.341-8.569-28.073-28.073-28.191-73.639 0-101.83l67.2-67.19c28.239-28.239 74.3-28.069 102.325.51 27.75 28.3 26.872 73.934-1.155 101.96l-13.087 13.087c-4.35 4.35-5.769 10.79-3.783 16.612 5.864 17.194 9.042 34.999 9.69 52.721.509 13.906 17.454 20.446 27.294 10.606l37.106-37.106c59.271-59.259 59.271-155.699.001-214.959z"/></svg> <a href="heatherturner.net/talks/uRos2021">heatherturner.net/talks/uRos2021</a></span> --- layout: true .footer[<svg aria-hidden="true" role="img" viewBox="0 0 512 512" style="height:1em;width:1em;vertical-align:-0.125em;margin-left:auto;margin-right:auto;font-size:inherit;fill:currentColor;overflow:visible;position:relative;"><path d="M326.612 185.391c59.747 59.809 58.927 155.698.36 214.59-.11.12-.24.25-.36.37l-67.2 67.2c-59.27 59.27-155.699 59.262-214.96 0-59.27-59.26-59.27-155.7 0-214.96l37.106-37.106c9.84-9.84 26.786-3.3 27.294 10.606.648 17.722 3.826 35.527 9.69 52.721 1.986 5.822.567 12.262-3.783 16.612l-13.087 13.087c-28.026 28.026-28.905 73.66-1.155 101.96 28.024 28.579 74.086 28.749 102.325.51l67.2-67.19c28.191-28.191 28.073-73.757 0-101.83-3.701-3.694-7.429-6.564-10.341-8.569a16.037 16.037 0 0 1-6.947-12.606c-.396-10.567 3.348-21.456 11.698-29.806l21.054-21.055c5.521-5.521 14.182-6.199 20.584-1.731a152.482 152.482 0 0 1 20.522 17.197zM467.547 44.449c-59.261-59.262-155.69-59.27-214.96 0l-67.2 67.2c-.12.12-.25.25-.36.37-58.566 58.892-59.387 154.781.36 214.59a152.454 152.454 0 0 0 20.521 17.196c6.402 4.468 15.064 3.789 20.584-1.731l21.054-21.055c8.35-8.35 12.094-19.239 11.698-29.806a16.037 16.037 0 0 0-6.947-12.606c-2.912-2.005-6.64-4.875-10.341-8.569-28.073-28.073-28.191-73.639 0-101.83l67.2-67.19c28.239-28.239 74.3-28.069 102.325.51 27.75 28.3 26.872 73.934-1.155 101.96l-13.087 13.087c-4.35 4.35-5.769 10.79-3.783 16.612 5.864 17.194 9.042 34.999 9.69 52.721.509 13.906 17.454 20.446 27.294 10.606l37.106-37.106c59.271-59.259 59.271-155.699.001-214.959z"/></svg> [heatherturner.net/talks/uRos2021](https://www.heatherturner.net/talks/uRos2021) <svg aria-hidden="true" role="img" viewBox="0 0 512 512" style="height:1em;width:1em;vertical-align:-0.125em;margin-left:auto;margin-right:auto;font-size:inherit;fill:currentColor;overflow:visible;position:relative;"><path d="M459.37 151.716c.325 4.548.325 9.097.325 13.645 0 138.72-105.583 298.558-298.558 298.558-59.452 0-114.68-17.219-161.137-47.106 8.447.974 16.568 1.299 25.34 1.299 49.055 0 94.213-16.568 130.274-44.832-46.132-.975-84.792-31.188-98.112-72.772 6.498.974 12.995 1.624 19.818 1.624 9.421 0 18.843-1.3 27.614-3.573-48.081-9.747-84.143-51.98-84.143-102.985v-1.299c13.969 7.797 30.214 12.67 47.431 13.319-28.264-18.843-46.781-51.005-46.781-87.391 0-19.492 5.197-37.36 14.294-52.954 51.655 63.675 129.3 105.258 216.365 109.807-1.624-7.797-2.599-15.918-2.599-24.04 0-57.828 46.782-104.934 104.934-104.934 30.213 0 57.502 12.67 76.67 33.137 23.715-4.548 46.456-13.32 66.599-25.34-7.798 24.366-24.366 44.833-46.132 57.827 21.117-2.273 41.584-8.122 60.426-16.243-14.292 20.791-32.161 39.308-52.628 54.253z"/></svg> [@HeathrTurnr](https://twitter.com/heathrturnr)] --- # Overview * Introduction to Generalized Nonlinear Models and the `gnm` package * Case study of a structured main effect: *Modelling the Consequences of Social Mobility with the Diagonal Reference Model* * Case study of a structured interaction: *Modelling Mortality Trends with the Lee-Carter Model* --- class: inverse middle # Introduction to GNMs and the <tt>gnm</tt> package --- # Linear models `\(\newcommand{\stext}[1]{{\style{font-family:inherit}{\text{#1}}}}\)` $$ \hat{y_i} = \beta_0 + \beta_1 x_i + \beta_2 z_i +\epsilon_i $$ -- $$ E(y_i) = \beta_0 + \exp(\theta_1) x_i + \beta_2 x_i^2 $$ -- In general $$ E(y_i) = \eta_i(\beta) = \stext{linear function of unknown parameters} $$ Also assumes variance essentially constant: $$ \text{Var}(y_i) = \phi a_i $$ with `\(a_i\)` known (often `\(a_i\equiv 1\)`). --- # Generalized linear models Problems with linear models in many applications: * range of `\(y\)` is restricted (e.g., `\(y\)` is a count, or is binary, or is a duration) * effects are not additive * variance depends on mean (e.g., large mean `\(\Rightarrow\)` large variance) *Generalized* linear models specify a non-linear *link function* and *variance function* to allow for such things, while maintaining the simple interpretation of linear models. --- # GLM structure A GLM is made up of a *linear predictor* `$$\eta = \beta_0 + \beta_1 x_{1} + ... + \beta_p x_{p}$$` a *link function* that describes how `\(E(Y) = \mu\)` depends on `\(\eta\)` `$$g(\mu) = \eta$$` a *variance function* that describes how `\(\text{Var}(Y)\)` depends on `\(\mu\)` `$$\text{Var}(Y) = \phi a_i V(\mu)$$` where the *dispersion parameter* `\(\phi\)` is a constant and `\(a_i\)` are known values. --- # Logistic regression `\(\newcommand{\stext}[1]{{\style{font-family:inherit}{\text{#1}}}}\)` Suppose proportions `\(Y_i/n_i\)` with `\(Y_i \sim \text{Binomial}(n_i, p_i)\)`. Then $$ `\begin{align*} E(Y_i/n_i) &= p_i & \text{Var}(Y_i/n_i) = \frac{1}{n_i}p_i(1 - p_i) \end{align*}` $$ The variance function is `$$V(\mu_i) = \mu_i(1 - \mu_i)$$` Using a logit link maps the mean from `\((0,1)\)` to `\((-\infty,\infty)\)` `$$g(\mu_i) = \text{logit}(\mu_i)=\log\left(\frac{\mu_i}{1 - \mu_i}\right)$$` --- # Log-linear model Suppose counts `\(Y_i \sim \text{Poisson}(\lambda_i)\)`. Then $$ `\begin{align*} E(Y_i) &= \lambda_i & \text{Var}(Y_i) = \lambda_i \end{align*}` $$ The variance function is `$$V(\mu_i) = \mu_i$$` Using a log link maps the mean from `\((0,\infty)\)` to `\((-\infty,\infty)\)` `$$g(\mu_i) = \log(\mu_i)$$` --- # Generalized Nonlinear Models A generalized nonlinear model (GNM) is the same as a GLM except that we have `$$g(\mu) = \eta(x; \beta)$$` where `\(\eta(x; \beta)\)` is nonlinear in the parameters `\(\beta\)`. Equivalently an extension of nonlinear least squares model, where the variance of `\(Y\)` is allowed to depend on the mean. Using a nonlinear predictor can produce a more parsimonious and interpretable model. --- .pull-left-45[ # Example: Mental Health Status A study of 1660 children from Manhattan recorded their mental impairment and parents' socioeconomic status (Agresti, 2002) ```r library(vcd) mosaic(mentalHealth) ``` ] .pull-right-54-raise[ <!-- --> ] --- # Independence A simple analysis of these data might be to test for independence of MHS and SES using a chi-squared test. Equivalent to testing goodness-of-fit of the independence model `$$\log(\mu_{rc}) = \alpha_r + \beta_c$$` Such a test compares the independence model to the saturated model `$$\log(\mu_{rc}) = \alpha_r + \beta_c + \gamma_{rc}$$` which may be over-complex. --- # Row-column Association One intermediate model is the Row-Column association model: `$$\log(\mu_{rc}) = \alpha_r + \beta_c + \phi_r\psi_c$$` (Goodman, 1979), an example of a multiplicative interaction model. -- For the mental health data: ``` Analysis of Deviance Table Model 1: Freq ~ SES + MHS Model 2: Freq ~ SES + MHS + Mult(SES, MHS) Model 3: Freq ~ SES + MHS + SES:MHS Resid. Df Resid. Dev Df Deviance Pr(>Chi) 1 15 47.4 2 8 3.6 7 43.8 2.3e-07 3 0 0.0 8 3.6 0.89 ``` --- # <tt>gnm</tt> R package * Provides a framework for specifying, fitting and criticizing GNMs in R * Designed to be `glm`-like * common arguments, returned objects, methods, etc * equivalent results for linear predictors * For `family = gaussian` equivalent to `nls` fit. --- # Model Specification * Linear terms in the model may be specified in the usual way, e.g. ```r y ∼ a + b + a:b ``` * Nonlinear terms must be specified using functions of class `"nonlin"` * specify structure of term, possible also labels & starting values * provided functions: `Exp`, `Inv`, `Mult`, `MultHomog`, `Dref` * custom functions --- # RC model in <tt>gnm</tt> ```r RCmod <- gnm(Freq ~ SES + MHS + Mult(SES, MHS), family = poisson, data = mentalHealth, verbose = FALSE, ofInterest = "Mult") coef(RCmod) ``` ``` Coefficients of interest: Mult(., MHS).SESA Mult(., MHS).SESB Mult(., MHS).SESC 0.37314 0.37649 0.10062 Mult(., MHS).SESD Mult(., MHS).SESE Mult(., MHS).SESF -0.04575 -0.40728 -0.70431 Mult(SES, .).MHSwell Mult(SES, .).MHSmild Mult(SES, .).MHSmoderate 0.76609 0.07004 -0.05557 Mult(SES, .).MHSimpaired -0.63370 ``` --- # Parameterisation in (G)LMs The independence model was defined in an over-parameterised form: $$ `\begin{align*} \log(\mu_{rc}) &= \alpha_r + \beta_c \\ &= (\alpha_r + 1) + (\beta_c - 1) \\ &= \alpha_r^* + \beta_c^* \end{align*}` $$ `glm` will impose identifiability constraints, by default `\(\alpha_1 = \beta_1 = 0\)` --- # Parameterisation in (G)NMs In the Row-Column Association model $$ `\begin{align*} \alpha_r + \beta_r + \phi_r\psi_c &= \alpha_r + (\beta_r - \psi_c) + (\phi_r + 1)\psi_c\\ &= \alpha_r + \beta_r + (2\phi_r)(\psi_c/2) \end{align*}` $$ -- We need to constrain both location and scale for identifiability. -- `gnm` works with overparameterised models - will return one of infinite parameterisations at random - by default, nonlinear parameters are not identifiable: standard error `NA` - constrain parameters of interest, as required --- class: inverse middle # Case study: Diagonal Reference Model --- # Background Data from the ONS Longitudinal Survey, which links census and vital event data for 1% of the population of England and Wales. Objective is to study the effect of social mobility on long-term limiting illness (LLTI) > *a long-standing illness, health problem or handicap that limits a person's activities or the work they can do* Data include * National Statistics socio-economic classification (7 classes, high man/prof to routine) in 1991 (origin) and 2001 (destination). * Age, gender --- # Bartley & Plewis Models Bartley & Plewis (2007) modelled trajectories by combining social mobility effects with origin or destination effects. For example, the origin + mobility model includes the term $$ \theta_{ij} = `\begin{cases} \alpha_i + \delta_0 & i > j\\ \alpha_i + \delta_1 & i = j\\ \alpha_i + \delta_2 & i < j\\ \end{cases}` $$ where `\(\alpha_i\)` is the effect for origin `\(i\)` and `\(i > j\)` denotes moving to a more favourable destination class. --- # GLM mobility models The full models are logistic regression models for probability of LLTI in 2001. Since the mobility models are GLMs, they can be fitted with `glm`, e.g. ```r dest_m <- glm(llti ~ age1991 + origin + mobility, family = binomial, data = LS_m) ``` Age in 1991 was included as a covariate and men and women were modelled separately. --- # Social Mobility Effects The exponential of the mobility parameters gives the odds ratios of LLTI, e.g. for men <table> <thead> <tr> <th style="text-align:left;"> </th> <th style="text-align:right;"> Origin + Mobility </th> <th style="text-align:right;"> Destination + Mobility </th> </tr> </thead> <tbody> <tr> <td style="text-align:left;"> To more favourable </td> <td style="text-align:right;"> 1.00 </td> <td style="text-align:right;"> 1.00 </td> </tr> <tr> <td style="text-align:left;"> Stable </td> <td style="text-align:right;"> 1.21 </td> <td style="text-align:right;"> 0.71 </td> </tr> <tr> <td style="text-align:left;"> To less favourable </td> <td style="text-align:right;"> 1.45 </td> <td style="text-align:right;"> 0.52 </td> </tr> </tbody> </table> * given origin, odds of LLTI increased by downward mobility * given destination, odds of LLTI decreased by downward mobility --- # Weighted Residuals The working residuals from the GLM fit can be averaged over each origin-destination combination to provide an indicator of fit for each trajectory These average residuals can be standardized to be approximately N(0, 1) assuming the model is correct. Passing a GLM fit to the `mosaic` function will produce a plot of the weighted residuals, with the p-value for a `\(\chi^2\)` goodness-of-fit test. --- .pull-left[ Origin + Mobility  ] .pull-right[ Destination + Mobility  ] Although two sides of the same coin, the two models capture different features of the data. --- # Diagonal Reference Model Sobel (1991) proposed the *diagonal reference model*, which combines origin, destination and social mobility effects $$ w_1 \gamma_i + (1 - w_1) \gamma_j $$ The effect of moving from class `\(i\)` to class `\(j\)` is a weighted sum of the diagonal effects `\(\gamma_i\)`, where `\(\gamma_i\)` is the effect for stable individuals in that class. This is a alternative to adding main effects of origin and destination. ??? strutured main effect --- # Dref in <tt>gnm</tt> A diagonal reference term may be specified via `Dref(fac1, fac2, ...)` The weights are constrained to be non-negative and to sum to one by defining them as $$ w_f = \frac{e^{\delta_f}}{\sum_i e^{\delta_i}} $$ and estimating unconstrained `\(\delta_f\)` parameters. --- <br> Following the previous analysis we fit the diagonal reference model with age as a covariate and fit models for men and women separately. ```r dref_m <- gnm(llti ~ age1991 + Dref(origin, dest), family = binomial, data = LS_m) coef(summary(classMobility)) ``` ``` Estimate Std. Error z value Pr(>|z|) (Intercept) -1.30795 NA NA NA age1991 0.06357 0.001221 52.08 < 2e-16 Dref(origin, dest)delta1 -0.05275 NA NA NA Dref(origin, dest)delta2 -0.53378 NA NA NA Dref(., .).origin|desthigh man/pro -3.74444 NA NA NA Dref(., .).origin|destlow man/pro -3.29767 NA NA NA Dref(., .).origin|destintermed -2.74320 NA NA NA Dref(., .).origin|destsmall empl/own ac -2.99956 NA NA NA Dref(., .).origin|destlow sup/tech -2.79887 NA NA NA Dref(., .).origin|destsemi-routine -2.47277 NA NA NA Dref(., .).origin|destroutine -2.27206 NA NA NA ``` --- # Diagonal Effects The model for the stable individuals is an ordinary logistic regression $$ \text{logit}(p_{iik}) = \beta_0 + \beta_1\text{age}_k + \gamma_i $$ Setting `\(\gamma_1 = 0\)`, the diagonal effects are log odds ratios of LLTI in class `\(i\)` against the first class for a given age $$ `\begin{align*} &\log\left(\frac{p_{iik}/(1 - p_{iik})}{p_{11k}/(1 - p_{11k})}\right) \\ =&(\beta_0 + \beta_1 \text{age}_k + \gamma_i) - (\beta_0 + \beta_1 \text{age}_k) \\ =& \gamma_i \end{align*}` $$ --- <br> ```r dref_m <- gnm(llti ~ age1991 + Dref(origin, dest), family = binomial, data = LS_m, constrain = "(high man)") coef(summary(classMobility)) ``` ``` Estimate Std. Error z value Pr(>|z|) (Intercept) -5.05239 0.063708 -79.305 < 2e-16 age1991 0.06357 0.001221 52.084 < 2e-16 Dref(origin, dest)delta1 NA NA NA NA Dref(origin, dest)delta2 NA NA NA NA Dref(., .).origin|desthigh man/pro 0.00000 NA NA NA Dref(., .).origin|destlow man/pro 0.44677 0.053225 8.394 < 2e-16 Dref(., .).origin|destintermed 1.00124 0.063136 15.858 < 2e-16 Dref(., .).origin|destsmall empl/own ac 0.74488 0.052400 14.227 < 2e-16 Dref(., .).origin|destlow sup/tech 0.94557 0.053376 17.715 < 2e-16 Dref(., .).origin|destsemi-routine 1.27167 0.053763 23.653 < 2e-16 Dref(., .).origin|destroutine 1.47237 0.049871 29.524 < 2e-16 ``` --- # Health Inequality <!-- --> --- # <tt>DrefWeights</tt> Setting `\(\delta_1 = 0\)` is enough to constrain the weights, so that $$ `\begin{align*} w_1 &= \frac{1}{1 + e^{\delta_2}} & w_2 &= \frac{e^{\delta_2}}{1 + e^{\delta_2}} \end{align*}` $$ `DrefWeights()` computes these weights and their standard errors, e.g. ```r DrefWeights(dref_m) ``` ``` $origin weight se 0.61799 0.02649 $dest weight se 0.38201 0.02649 ``` --- # Diluting Effect of Social Mobility The ratio of origin weight to destination weight quantifies the diluting effect of social mobility on health inequality * **1:0** social mobility has no effect on individual * **0:1** social mobility has no effect on inequality * otherwise social mobility increases P(LLTI) in the upper classes and decreases P(LLTI) in the lower classes. The larger the origin weight, the greater the diluting effect. ??? mobile assume probability of stable in destination class --- # Diagonal Weights The diagonal weights for the LLTI models are <table> <thead> <tr> <th style="text-align:left;"> </th> <th style="text-align:left;"> Men </th> <th style="text-align:left;"> Women </th> </tr> </thead> <tbody> <tr> <td style="text-align:left;"> Origin </td> <td style="text-align:left;"> 0.62 (0.03) </td> <td style="text-align:left;"> 0.41 (0.03) </td> </tr> <tr> <td style="text-align:left;"> Destination </td> <td style="text-align:left;"> 0.38 (0.03) </td> <td style="text-align:left;"> 0.59 (0.03) </td> </tr> </tbody> </table> Since their destination class is given more weight, social mobility has a greater impact for women. --- # Model Comparison The models can be compared by the difference in deviance from the null model: <table> <thead> <tr> <th style="empty-cells: hide;border-bottom:hidden;" colspan="1"></th> <th style="border-bottom:hidden;padding-bottom:0; padding-left:3px;padding-right:3px;text-align: center; " colspan="2"><div style="border-bottom: 1px solid #ddd; padding-bottom: 5px; ">Men</div></th> <th style="border-bottom:hidden;padding-bottom:0; padding-left:3px;padding-right:3px;text-align: center; " colspan="2"><div style="border-bottom: 1px solid #ddd; padding-bottom: 5px; ">Women</div></th> </tr> <tr> <th style="text-align:left;"> </th> <th style="text-align:right;"> Deviance </th> <th style="text-align:right;"> Df </th> <th style="text-align:right;"> Deviance </th> <th style="text-align:right;"> DF </th> </tr> </thead> <tbody> <tr> <td style="text-align:left;"> Origin + mobility </td> <td style="text-align:right;"> 4050 </td> <td style="text-align:right;"> 9 </td> <td style="text-align:right;"> 3194 </td> <td style="text-align:right;"> 9 </td> </tr> <tr> <td style="text-align:left;"> Destination + mobility </td> <td style="text-align:right;"> 4026 </td> <td style="text-align:right;"> 9 </td> <td style="text-align:right;"> 3273 </td> <td style="text-align:right;"> 9 </td> </tr> <tr> <td style="text-align:left;"> Diagonal reference </td> <td style="text-align:right;"> 4121 </td> <td style="text-align:right;"> 8 </td> <td style="text-align:right;"> 3312 </td> <td style="text-align:right;"> 8 </td> </tr> </tbody> </table> The diagonal reference model reduces the deviance the most despite requiring fewer degrees of freedom. --- class: inverse middle # Case study: Lee-Carter Model --- # Background Data from the Human Mortality Database, which provides open, international access to population and mortality data from 41 countries. Here we consider data on deaths for men aged 20 to 99 in Canada from 1921 to 2003. The data comprise the variables * `Year`: year death recorded * `Age`: age at time of death * `Deaths`: number of deaths * `Exposure`: number at risk model --- # Lee-Carter model Lee & Carter (1992) proposed a model for age-specific population mortality rates that has been the basis of many subsequent analyses. Suppose that death count `\(D_{ay}\)` for individuals of age `\(a\)` in year `\(y\)` has mean `\(\mu_{ay}\)` and *quasi-Poisson* variance `\(\phi\mu_{ay}\)`. *Lee-Carter model* $$ `\begin{align*} &\log(\mu_{ay}/e_{ay}) = \alpha_a + \beta_a\gamma_y\, ,\\ \Rightarrow & \log(\mu_{ay}) = \log(e_{ay}) + \alpha_a + \beta_a\gamma_y \end{align*}` $$ where `\(e_{ay}\)` is the *exposure* (number of lives at risk). --- # Lee-Carter in gnm The Lee-Carter model can be fitted with `gnm` as follows ```r LCmodel <- gnm(Deaths ~ Mult(Exp(Age), Year), eliminate = Age, offset = log(Exposure), family = "quasipoisson") ``` * `Exp(Age)` is used to constrain the parameters representing the *sensitivity* of age group `\(a\)` to have the same sign, * `Age` is *eliminated* since it will typically have many levels, * `offset` is used to add the log exposure with a coefficient of 1 * the `quasipoisson` family is used so that the dispersion parameter `\(\phi\)` is estimated rather than fixed to 1. ??? The eliminate argument of gnm is used to specify that the Age parameters replace the intercept in the model. This has two benefits: * gnm exploits the structure of these parameters to estimate them more efficiently * these nuisance parameters are excluded from summaries of the model object --- <br> Residuals are more dispersed for ages 25-35 and 50-65, as well as pre-1930 <img src="index_files/figure-html/res1-1.png" width="49%" /><img src="index_files/figure-html/res1-2.png" width="49%" /> --- Residuals by birth cohort (calendar year - age) show a clear trend <img src="index_files/figure-html/res2-1.png" width="75%" style="display: block; margin: auto;" /> --- # Renshaw & Haberman extension Renshaw & Haberman (2006) considered a cohort-based extension `$$\log(\mu_{ay}/e_{ay}) = \alpha_a + \beta^{(0)}_a \gamma_y + \beta^{(1)}_a \lambda_{y-a}$$` This is easy to specify in `gnm` ```r Deaths ~ Mult(Exp(Age), Year) + Mult(Exp(Age), Cohort) ``` But not so easy to fit! - `Year`, `Age` and `Cohort` are directly related - now have 405 multiplicative parameters, data for some cohorts is sparse ??? Haberman and Renshaw rejected the model on practical grounds --- # Fitting the Renshaw & Haberman model Step 1. Initially fit the model with age multipliers fixed to 1 `$$\log(\mu_{ay}/e_{ay}) = \alpha_a + \gamma_y + \lambda_{y-a}$$` Step 2. Fit the full model with starting values from step 1, without constraining the age multipliers. * Converges in 54 iterations * Find the multiplier for age 99 in the first multiplicative term is negative Step 3. Re-fit using `Exp(Age)` to constrain the age multipliers to be positive, setting `$$\beta^{(0)}_{99} = \exp(-1000) = 0$$` --- Residuals from Renshaw & Haberman model <img src="index_files/figure-html/res1b-1.png" width="49%" /><img src="index_files/figure-html/res1b-2.png" width="49%" /> --- <br> <img src="index_files/figure-html/res2b-1.png" width="75%" style="display: block; margin: auto;" /> --- # Age multipliers An advantage of re-parameterising the model such that $$ `\begin{align*} \beta^{(0)}_a &= \exp(\delta^{(0)}_a) & \beta^{(1)}_a &= \exp(\delta^{(1)}_a) \end{align*}` $$ is that simple contrasts (differences) of `\(\delta^{(n)}_a\)` are identifiable. We can obtain the contrasts and standard errors with `getContrasts()` ```r AgeMult2 <- pickCoef(APCmodel_GNM, "Mult(Exp(.), Cohort)", fixed = TRUE) getContrasts(APCmodel_GNM, AgeMult2, check = FALSE)$qvframe[1:3,] ``` ``` estimate SE quasiSE quasiVar Mult(Exp(.), Cohort).Age20 0.0000 0.0000 0.0810 0.006561 Mult(Exp(.), Cohort).Age21 -0.2361 0.1294 0.1027 0.010543 Mult(Exp(.), Cohort).Age22 -0.1666 0.1290 0.1021 0.010420 ``` ??? delta are log-multipliers the relative approximation errors for the quasi-variances of these contrasts range from −24% to 70.1%, so the quasi-variance approximation performs poorly in this example --- <br> <img src="index_files/figure-html/AgeMultipliers-1.png" width="75%" style="display: block; margin: auto;" /> ??? Figure shows a clear pattern in the log-multipliers for age in both terms, with the sensitivity of age initially rising until about 35 years of age, then decreasing with increasing age. However there is a lot of local variability with the estimates rising and falling in consecutive years and it is clear that the precision of the estimates decreases with age. The large uncertainty in the log-multipliers of the cohort effects for ages 85 and above is due to the early cohorts, for which we only have data at the end of the age range. --- # Spline model Define a piecewise linear spline over age: ```r Age_num <- as.numeric(as.character(Canada$Age)) Age_bs <- bs(Age_num, knots = c(seq(25, 70, by = 5), 80), degree = 1) ``` Use instead of age in the Renshaw & Haberman model: ```r Deaths ~ Mult(Exp(Age_bs), Year) + Mult(Exp(Age_bs), Cohort) ``` --- # Model comparison <table> <thead> <tr> <th style="text-align:left;"> Model </th> <th style="text-align:right;"> Deviance </th> <th style="text-align:right;"> Df </th> <th style="text-align:right;"> Percent </th> </tr> </thead> <tbody> <tr> <td style="text-align:left;"> Lee-Carter </td> <td style="text-align:right;"> 32423 </td> <td style="text-align:right;"> 6399 </td> <td style="text-align:right;"> NA </td> </tr> <tr grouplength="3"><td colspan="4" style="border-bottom: 1px solid;"><strong>Age-Year-Cohort models</strong></td></tr> <tr> <td style="text-align:left;padding-left: 2em;" indentlevel="1"> Main effects </td> <td style="text-align:right;"> 24730 </td> <td style="text-align:right;"> 6318 </td> <td style="text-align:right;"> 0.00 </td> </tr> <tr> <td style="text-align:left;padding-left: 2em;" indentlevel="1"> Spline </td> <td style="text-align:right;"> 12486 </td> <td style="text-align:right;"> 6293 </td> <td style="text-align:right;"> 83.71 </td> </tr> <tr> <td style="text-align:left;padding-left: 2em;" indentlevel="1"> Renshaw-Haberman </td> <td style="text-align:right;"> 10104 </td> <td style="text-align:right;"> 6159 </td> <td style="text-align:right;"> 100.00 </td> </tr> </tbody> </table> The spline model preserves the year and cohort multipliers which could be modelled using time series methods for forecasting as in Renshaw & Haberman (2006) - only depends on the *differences* between multipliers. ??? so it is not necessary to impose constraints on these parameters --- class: inverse middle # Wrap-up --- # Finding out more Case studies with reproducibility materials: [github.com/hturner/gnm-case-studies](https://github.com/hturner/gnm-case-studies) - So far Lee-Carter and UNIDIFF - Diagonal Reference and Rasch models to be added - Working paper with D. Firth and I. Kosmidis in progress See the `gnm` vignette for further examples Also [github.com/hturner/gnm-half-day-course](https://github.com/hturner/gnm-half-day-course) and [github.com/hturner/gnm-day-course](https://github.com/hturner/gnm-day-course) --- # References Agresti, A. 2002. Categorical Data Analysis. 2nd ed. New York: Wiley. Bartley, M, and I Plewis. 2007. [10.1111/j.1467-985X.2006.00464.x](https://doi.org/10.1111/j.1467-985X.2006.00464.x). Goodman, L A. 1979. J. Amer. Statist. Assoc. [10.1080/01621459.1979.10481650](https://doi.org/10.1080/01621459.1979.10481650). Lee, R D, and L Carter. 1992. J. Amer. Statist. Assoc. [10.1080/01621459.1992.10475265](https://doi.org/10.1080/01621459.1992.10475265). Renshaw, A, and S Haberman. 2006. Insur Math Econ [10.1016/j.insmatheco.2005.12.001](https://doi.org/10.1016/j.insmatheco.2005.12.001). Sobel, M. E. 1981. Amer. Soc. Rev. [10.2307/2095086](https://doi.org/10.2307/2095086).